jmone

Your footage looked great! I can see it was graded in Resolve. Note that one clip was 24p and the other 23.976, and the HDR metadata was not showing HDR 1000: it was blank or 0.

I'd previously taken some stock shots comparing SGamut3 / SGamut3.cine / SCinetone using a DaVinci colour managed workspace. No grade was applied; this is straight out of the camera to render (with SCinetone set to Bypass for colour management, as it is meant to be a ready-to-go deliverable). This time I rendered it out to HDR1000 in 4:2:0 HEVC (so you may need to download it to play): https://behome.dyndns.info/index.php/s/FKzS9mAPimF2rAW . I left SCinetone in, but it really shows how poor it is if you want HDR (not that it is designed for anything other than SDR deliverables).

I then took the same SGamut3.cine clip, used the method you outlined in that post, and then rendered it using the same settings as above: https://behome.dyndns.info/index.php/s/AsZCoFDcWLsNZaq

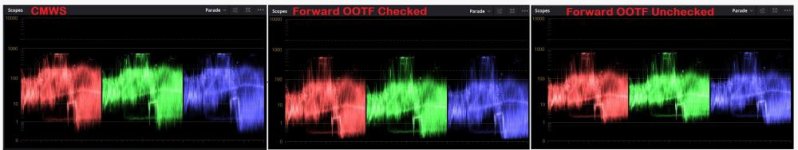

I don't think the CST method outlined gives an accurate starting point for a grade (as an example, look at the purple square on the colour checker; it is way off). And you're right, checking or unchecking OOTF makes a big difference (see pic). Checked, it pushes a lot of detail down to under 1 nit, but unchecked it compresses things too much, with 1 nit looking almost like a floor.

Note: they are all UHD, 50 fps, at 80 Mbps.
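
On the 1 nit point: for anyone who wants to put numbers on where that line sits in an HDR10 signal, here is a rough Python sketch of the standard PQ (SMPTE ST 2084) encoding curve. This is only the published formula, not Resolve's CST or OOTF processing, and the function names `pq_encode` and `pq_code_10bit` are my own for illustration. The 10-bit value assumes full-range coding; video-range levels will differ.

```python
# SMPTE ST 2084 (PQ) constants, as published in the standard.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_encode(nits: float) -> float:
    """Map absolute luminance in nits (0..10000) to a normalised PQ signal (0..1)."""
    y = max(nits, 0.0) / 10000.0  # normalise to the 10,000 nit PQ peak
    y_m1 = y ** M1
    return ((C1 + C2 * y_m1) / (1 + C3 * y_m1)) ** M2


def pq_code_10bit(nits: float) -> int:
    """Full-range 10-bit code value (assumption: 0..1023, not video range)."""
    return round(pq_encode(nits) * 1023)


if __name__ == "__main__":
    # Print where a few luminance levels land on the PQ curve.
    for nits in (0.1, 1, 10, 100, 1000):
        print(f"{nits:>6} nits -> signal {pq_encode(nits):.4f}, "
              f"10-bit code {pq_code_10bit(nits)}")
```

Run as-is, it shows 1 nit sitting at roughly 15% of the PQ signal range, so a fair chunk of code values lives below it. That would be consistent with the OOTF checkbox making such a visible difference in the shadows, though the sketch does not model the OOTF itself.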
